Visualizing logprobs from OpenAI responses

In the previous post we explored what logits, logprobs and temperature are. We learned that LLMs are actually outputting a probability distribution for every single token they generate.

But looking at raw numbers and graphs is boring. Let’s see how we can use this data to visualize the model’s “confidence” and at least try to spot places where it is “unsure”.

Getting Logprobs from the API

If you are using the OpenAI API, you need to set two parameters: logprobs to true, and top_logprobs to an integer $k$ (e.g. 2 or 5) to see the top-$k$ alternatives. The information is then provided for every token of the response, reflecting the distribution as it was at the moment each token was generated.

Here is what a raw sample HTTP request could look like for the question “Who is the President of Poland?”:

curl https://api.openai.com/v1/chat/completions \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer $OPENAI_API_KEY" \
  -d '{
    "model": "gpt-4o",
    "messages": [
      {
        "role": "system",
        "content": "You are a helpful assistant."
      },
      {
        "role": "user",
        "content": "Who is the president of Poland?"
      }
    ],
    "logprobs": true,
    "top_logprobs": 2
  }'
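For comparison, here is the same request from Python. This is a minimal sketch assuming the official openai package (v1.x), where the parameters are passed as logprobs=True and top_logprobs=k, and each generated token comes back as an object with token, logprob and top_logprobs attributes. The helper name ask_with_logprobs is hypothetical, and the client is passed in so the function itself needs no import.

```python
def ask_with_logprobs(client, question, k=2, model="gpt-4o"):
    """Ask one question with logprobs enabled and return per-token data.

    client: assumed to be an openai.OpenAI() instance (openai>=1.0).
    Returns a list of (token, logprob, [(alt_token, alt_logprob), ...]).
    """
    response = client.chat.completions.create(
        model=model,
        messages=[
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": question},
        ],
        logprobs=True,   # logprob of each generated token
        top_logprobs=k,  # plus the k most likely alternatives at each position
    )
    content = response.choices[0].logprobs.content
    return [
        (item.token, item.logprob, [(alt.token, alt.logprob) for alt in item.top_logprobs])
        for item in content
    ]
```

Usage would be ask_with_logprobs(OpenAI(), "Who is the president of Poland?") after from openai import OpenAI; by default OpenAI() reads the key from the OPENAI_API_KEY environment variable.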

And here is a relevant snippet of the JSON response we get back. Notice the logprobs field inside choices:

{
  "id": "chatcmpl-123...",
  "object": "chat.completion",
  "created": 1677652288,
  "model": "gpt-4o-2024-08-06",
  "choices": [
    {
      "index": 0,
      "message": {
        "role": "assistant",
        "content": "Andrzej Duda",
        "refusal": null,
        "annotations": []
      },
      "logprobs": {
        "content": [
          {
            "token": "And",
            "logprob": -0.000035,
            "top_logprobs": [
              { "token": "And", "logprob": -0.000035 },
              { "token": "The", "logprob": -11.4 }
            ]
          },
          {
            "token": "rzej",
            "logprob": -0.00021,
            "top_logprobs": [
              { "token": "rzej", "logprob": -0.00021 },
              { "token": "rew", "logprob": -9.1 }
            ]
          },
          {
            "token": " D",
            "logprob": -0.004,
            "top_logprobs": [
              { "token": " D", "logprob": -0.004 },
              { "token": " Du", "logprob": -6.2 }
            ]
          },
          {
            "token": "uda",
            "logprob": -0.0012,
            "top_logprobs": [
              { "token": "uda", "logprob": -0.0012 },
              { "token": "uda", "logprob": -7.5 }
            ]
          }
        ]
      },
      "finish_reason": "stop"
    }
  ],
  ...
}

The API returns logprobs (log probabilities), which are literally the natural logarithm of the probability $ p $. To get the actual probability as a percentage, we just need to take the exponential:

\[p = e^{\text{logprob}} \times 100\%\]

For example, a logprob of -0.004 corresponds to $ e^{-0.004} \approx 0.996 $, or 99.6%, while -6.2 (the second choice at the same position) corresponds to only $ e^{-6.2} \approx 0.002 $, or 0.2%.
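The conversion is a one-liner, and applying it to the response shape shown above gives the top versus second-best percentage for each token. A minimal Python sketch: to_percent and top_vs_second are hypothetical helper names, and it assumes the request was made with top_logprobs of at least 2.

```python
import json
import math


def to_percent(logprob):
    """Convert a natural-log probability into a percentage: e^logprob * 100."""
    return math.exp(logprob) * 100


def top_vs_second(response):
    """Return (token, top %, second-best %) for every generated token.

    response: the parsed JSON body of a Chat Completions call made with
    logprobs=true and top_logprobs >= 2.
    """
    rows = []
    for item in response["choices"][0]["logprobs"]["content"]:
        # Sort defensively so [0] is the most likely alternative and [1] the runner-up.
        alts = sorted(item["top_logprobs"], key=lambda a: a["logprob"], reverse=True)
        rows.append((item["token"], to_percent(alts[0]["logprob"]), to_percent(alts[1]["logprob"])))
    return rows


# A trimmed version of the " D" entry from the response above.
sample = json.loads("""
{"choices": [{"logprobs": {"content": [
  {"token": " D", "logprob": -0.004,
   "top_logprobs": [{"token": " D", "logprob": -0.004},
                    {"token": " Du", "logprob": -6.2}]}
]}}]}
""")

for token, top, second in top_vs_second(sample):
    print(f"{token!r}: {top:.2f}% vs {second:.2f}%")  # ' D': 99.60% vs 0.20%
```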

Let’s visualize the probabilities for the “Andrzej Duda” tokens. In this case, the model is nearly 100% certain, and the second-best alternatives have almost zero probability.

Top vs Second Token Probability (%):

| Token | Top choice | Second choice |
|---|---|---|
| "And" | 99.99 | 0.001 |
| "rzej" | 99.98 | 0.01 |
| " D" | 99.60 | 0.20 |
| "uda" | 99.88 | 0.05 |

The model is very confident about every token of “Andrzej Duda”!

I specifically chose this example to illustrate an important point: the model’s certainty about a given fact does not mean that the answer is correct, nor that it is free of hallucination. Following the 2025 presidential election, Karol Nawrocki is the President of Poland. However, based on its training data (and with no RAG to supply up-to-date information), the model is certain that Andrzej Duda is still the President.

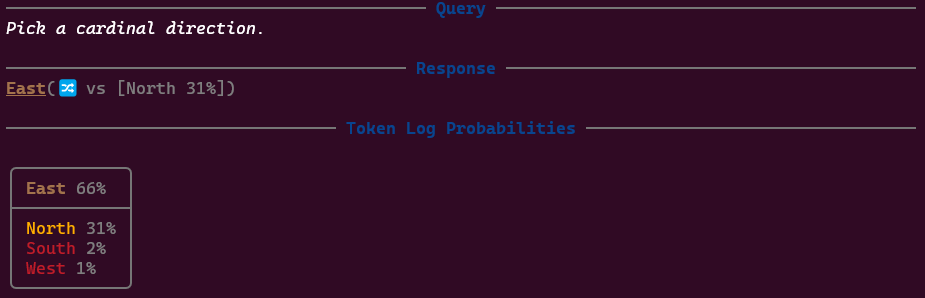

Visualizing “Token Clues”

Nevertheless, it is interesting to see how confident the model is about what it takes to be true. We can visualize some “token clues” to get a feel for its internal “thinking” and spot interesting tokens.

I’ve implemented a simple Logprobs app in C# to play with this. Here are the three main clues I found useful:

1. Low Confidence Clue (“uncertainty”)

If the probability of the chosen token is low (e.g., $< 10\%$), the model had no strong preference: either the probability was spread over many candidates, or temperature sampling picked a less probable token. Either way, the model has low confidence in what it selected. Our first condition can be:

\[p(token) < 0.1\]

2. Ambiguity (close alternatives)

Sometimes the model may be fairly confident, but there are other options that are almost as good. I would call a token *ambiguous* if the model could choose between two or more tokens with similar probabilities. Our simplified condition can be:

\[p(top\_token) - p(second\_token) < 0.15\]

3. Not Top Choice (sampling effect)

If you use temperature > 0, the model might sample a token that wasn’t the most probable one. This is a clear signal that the token is a product of sampling randomness (“creativity”) rather than the model’s top choice. The condition would be:

\[token \neq top\_token\]
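The three conditions above can be sketched as one Python function. This is a minimal illustration, not the author’s C# app: token_clues and TokenClues are hypothetical names, each token is described by its logprob plus a list of (token, logprob) alternatives taken from top_logprobs, and the thresholds are the 10% and 0.15 values from the text.

```python
import math
from dataclasses import dataclass

LOW_CONFIDENCE = 0.10  # clue 1: chosen token below 10%
AMBIGUITY_GAP = 0.15   # clue 2: top two alternatives closer than 0.15


@dataclass
class TokenClues:
    token: str
    p: float              # probability of the chosen token
    low_confidence: bool  # clue 1
    ambiguous: bool       # clue 2
    not_top: bool         # clue 3


def token_clues(token, logprob, top_logprobs):
    """Evaluate the three clues for one generated token.

    top_logprobs: list of (alt_token, alt_logprob) pairs for this position.
    """
    p = math.exp(logprob)
    candidates = list(top_logprobs)
    # A sampled token can fall outside the returned top-k, so add it if missing.
    if token not in (alt for alt, _ in candidates):
        candidates.append((token, logprob))
    ranked = sorted(candidates, key=lambda pair: pair[1], reverse=True)
    top_token, top_lp = ranked[0]
    # With a single candidate there is no runner-up, so treat the gap as maximal.
    gap = math.exp(top_lp) - math.exp(ranked[1][1]) if len(ranked) > 1 else 1.0
    return TokenClues(
        token=token,
        p=p,
        low_confidence=p < LOW_CONFIDENCE,
        ambiguous=gap < AMBIGUITY_GAP,
        not_top=token != top_token,
    )


print(token_clues("And", -0.000035, [("And", -0.000035), ("The", -11.4)]))
```

Comparing tokens by their text is a simplification; a real tool would compare token IDs or byte sequences.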

Putting it all together

By highlighting text with these cues, we can get an “X-Ray” view of the generation.

- Green: confidence > 90%
- Lime: confidence > 70%
- Yellow: confidence > 50%
- Orange: confidence > 30%
- Red: low confidence (< 30%)
- Annotations: 🔀 marks Ambiguous (point 2.), 🎯 marks Not Top (point 3.)
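A rough Python sketch of this color scheme, rendering each token as an HTML span. The function names, the CSS color names, and putting boundary values (exactly 90%, 70%, ...) into the lower band are my assumptions; the author’s app may render things differently.

```python
import html
import math

# Bands from the legend above, checked from the highest threshold down.
BANDS = [(0.90, "green"), (0.70, "lime"), (0.50, "yellow"), (0.30, "orange")]


def confidence_color(p):
    """Map a probability (0..1) to a legend color; 30% or less is red."""
    for threshold, color in BANDS:
        if p > threshold:
            return color
    return "red"


def render_token(token, logprob, ambiguous=False, not_top=False):
    """Return one token as an HTML span colored by confidence, plus clue annotations."""
    p = math.exp(logprob)
    marks = ("🔀" if ambiguous else "") + ("🎯" if not_top else "")
    return (
        f'<span style="background:{confidence_color(p)}" title="{p:.1%}">'
        f"{html.escape(token)}</span>{marks}"
    )


def render(tokens):
    """tokens: iterable of (token, logprob, ambiguous, not_top) tuples."""
    return "".join(render_token(*t) for t in tokens)


print(render([("And", -0.000035, False, False), ("rzej", -0.00021, False, False)]))
```

Escaping the token text matters because tokens can contain characters such as < or & that would otherwise break the HTML.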

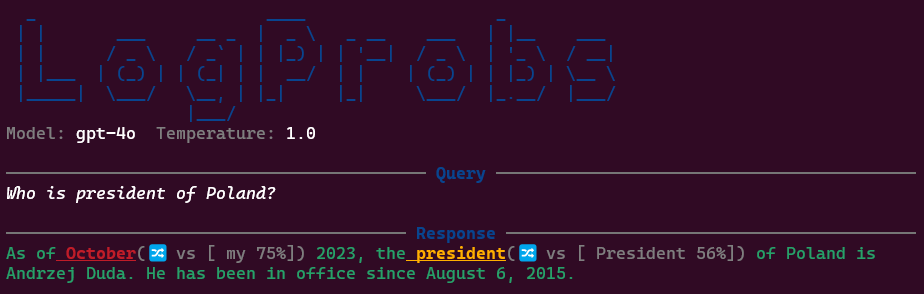

Imagine asking the model: “Who is the president of Poland?”

Here, even a factual answer starts with some ambiguity, because the model has multiple ways to phrase its knowledge-cutoff disclaimer. We can also see that the sentence starts with “As of October”…, but with a 75% chance it could have been “As of my”….

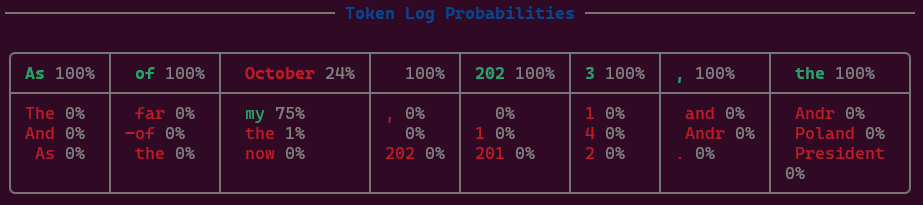

The tool also visualizes the top-$k$ alternatives, so the beginning of the sentence looks like this in detail:

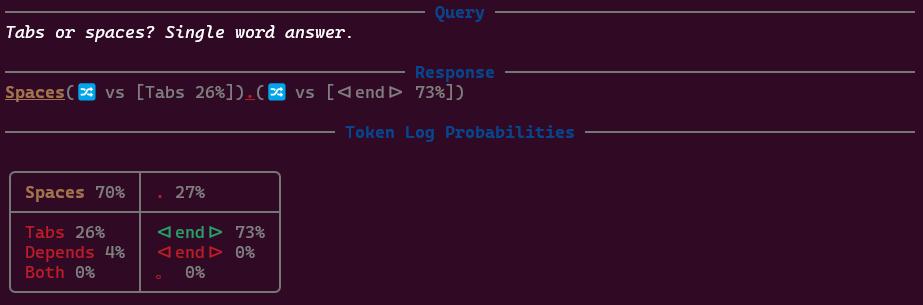

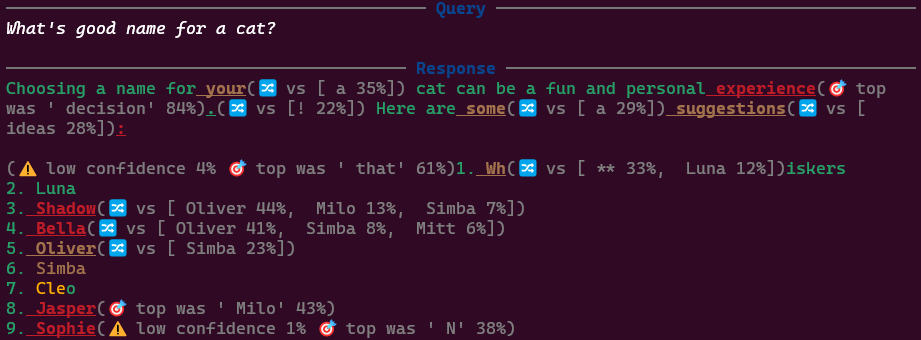

Of course, we can expect some ambiguity for certain questions, like “Tabs or spaces? Single word answer.”:

Clearly it prefers spaces…😇

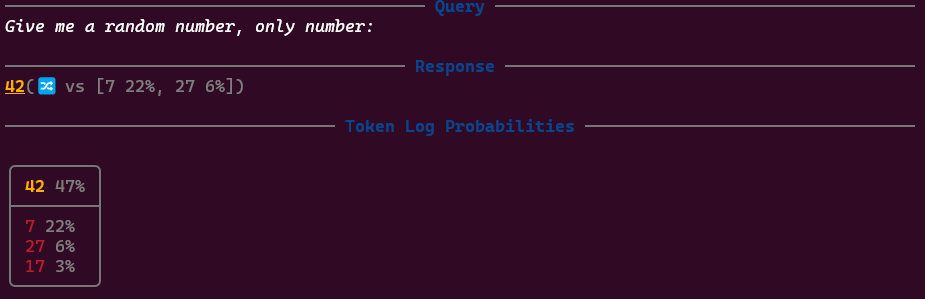

Or we can observe the famous non-randomness of random numbers:

Or some interesting “biases”:

For more complex queries, the answer will contain many more clues like this, but it is still interesting to observe:

Note: here, for example, we can observe that there was a 33% chance the model would use Markdown bold (**) when listing cat names.

A Note on Hugging Face

If you are running open-weights models locally (or in the cloud) using the Hugging Face transformers library, you can access the same information.

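When calling generate(), simply set return_dict_in_generate=True and output_scores=True. The returned object will contain scores: for each generated token, the pre-softmax scores over the whole vocabulary (after any logits processors, such as temperature, have been applied; recent versions of transformers also accept output_logits=True for the unprocessed logits). You can then apply softmax to convert them into probabilities.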

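from transformers import AutoModelForCausalLM, AutoTokenizer

model_name = "gpt2"  # example only: any causal LM from the Hugging Face Hub
tokenizer = AutoTokenizer.from_pretrained(model_name)
model = AutoModelForCausalLM.from_pretrained(model_name)
inputs = tokenizer("Who is the president of Poland?", return_tensors="pt").input_ids

outputs = model.generate(
    inputs,
    max_new_tokens=20,
    return_dict_in_generate=True,
    output_scores=True,
)

# outputs.scores: one (batch, vocab_size) tensor of pre-softmax scores per generated token
probs = [step.softmax(dim=-1) for step in outputs.scores]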
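To get natural-log probabilities in the same units as the OpenAI logprob field, apply log-softmax instead of softmax. On tensors that is torch.log_softmax(outputs.scores[i], dim=-1), and transformers also offers model.compute_transition_scores(outputs.sequences, outputs.scores, normalize_logits=True) for the logprob of each chosen token. The pure-Python sketch below (hypothetical helper names, operating on a plain list of scores for a single step) just shows what that computation does, including the max-subtraction trick that keeps exp() from overflowing.

```python
import math


def log_softmax(scores):
    """Convert one step's vocabulary scores into natural-log probabilities."""
    # Subtracting the max keeps exp() from overflowing on large scores.
    m = max(scores)
    log_norm = m + math.log(sum(math.exp(s - m) for s in scores))
    return [s - log_norm for s in scores]


def top_k(scores, k=2):
    """Return the k most likely (token_id, logprob) pairs, similar to top_logprobs."""
    logprobs = log_softmax(scores)
    ranked = sorted(range(len(logprobs)), key=logprobs.__getitem__, reverse=True)
    return [(i, logprobs[i]) for i in ranked[:k]]


print(top_k([2.0, 1.0, 0.0, -1.0], k=2))
```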

Conclusion

Logprobs give us a peek under the hood. They turn a “black box” text generator into a slightly more transparent probabilistic machine. They won’t protect you from hallucinations (as we saw with the Andrzej Duda example), but they can at least give some clues about what the model “thinks”!
